An Introduction to Logics

Since logic was one of the focuses of my studies and it is a subject every programmer needs basic knowledge about, I have decided to write about it. Actually a programmer needs to know only little compared to what I learned. I have decided to steer the middle course and write about a bit more than just the basics.

Written by Claus Volko

Vienna, Austria, Europe

Contact: cdvolko (at) gmail (dot) com

Homepage: www.cdvolko.net

1. History

Human beings have pondered over logics for many centuries. The old Greeks and, later, Medieval scholars tried to create lists of rules which logical conclusions were allowed and which not. The earliest example of this is the Aristotelian syllogisms. This was a list of rules what conclusions you were allowed to draw given certain premises. The Medieval scholars annotated these syllogisms with words. For example, "Modus Barbara" meant: "If all A are x and all x are y, then all A are y."

Actually from the view of a person who has a natural gift at logical thinking (like any good programmer does) these methods to memorize syllogisms appear to be a bit pathetic, since we are able to easily check whether a reasoning is sound and valid.

In the 18th through 20th centuries modern mathematical logics began to take shape, with the well-known work of George Boole (Boolean Algebra) and others (Gottlob Frege, Bertrand Russell etc.). Nowadays classical logic is well established, but there are also some extensions to express things that cannot be expressed in classical logic, such as temporary changes or possibilities. Moreover, there are some alternative approaches to logic which have different semantics.

2. Types of Logic

2.1 Classical Propositional Logic

Classical propositional logic is the most basic form of logic. The language of propositional logic allows you to express like "If statement A is true and statement B is false, then statement C is true".

In classical logic, any statement can be either true or false. There is no third possibility. If the validity of a statement is unknown, we simply cannot calculate with it.

Propositional logic is all most programmers need. It suffices for their purposes. There are various logical operators that can be used to make inferences. The most basic ones are negation, conjunction and disjunction.

There are various notations in logic. One common notation for negation would be to write NOT a. Another one would be to write ~a. The semantics are simple: If a statement is true, then its negation is false, and if a statement is false, its negation is true.

Conjunction is commonly written as a AND b or a ^ b or a & b or even a * b. The last notation especially makes sense if you follow Boole's proposition and assign 0 to falsity and 1 to truth. Then the result of conjunction is actually the product of the two truth values. Likewise, disjunction can be written as either a OR b or a v b or a | b or a + b. (NB: The | operator is used in programming languages sharing the syntax of C, but in philosophical essays it often has a different meaning, namely ~(a ^ b), also known as Sheffer's operator or NAND, see below. Always get informed about the meaning of the symbols when you read an article before making conclusions!)

The semantics of conjunction: The logical product is true if and only if both parameters are true. (Instead of "if and only if", I will write "iff" from now on.) The logical sum in disjunction, by contrast, is false iff both parameters are false.

Another widely used operator, which, however, is not supported by most programming languages, is implication, written as a -> b. The meaning of this is: If a is true, then b must also be true. This implies that if a is false, the value of b can be either true or false. Implication can be easily emulated by disjunction and negation: a -> b is equivalent to ~a v b.

The last really widespread operator is logical equivalence, which is true if either both a and b are true or both a and b are false. We will write a == b to denote equivalence, following the syntax of C.

In electrical engineering the following operators often appear as well: a NAND b and a NOR b, having the meaning ~(a ^ b) and ~(a v b), respectively. These operators are useful for circuit design since they can be used for emulating AND, OR and NOT, and therefore you can produce masses of them and thus save production costs. I leave it to you as an exercise to find out how AND, OR and NOT can be emulated using either NAND or NOR.

Boolean algebra also knows some laws which can be used to transform or simplify logical equations. For instance, the De Morgan's laws ~(a v b) = ~a ^ ~b and ~(a ^ b) = ~a v ~b. There are also the distributive laws, a ^ (b v c) = (a ^ b) v (a ^ c) and a v (b ^ c) = (a v b) ^ (a v c) and some more complicated laws.

2.2 First-order logic

You cannot express the famous "All men are mortal, Socrate is a man, therefore Socrate is mortal" in propositional logic. You need to extend its syntax. The result is first-order logic. "Being mortal" can be defined as a predicate, let's call it "mortal", and "being a man" can be defined as a predicate as well, let's call it "man". Then you can basically write: "All x for which man(x) have the property that mortal(x), man(Socrate), therefore mortal(Socrate)." Formally this would be written as:

((\forall x man(x) -> mortal(x)) ^ man(Socrate)) -> mortal(Socrate)

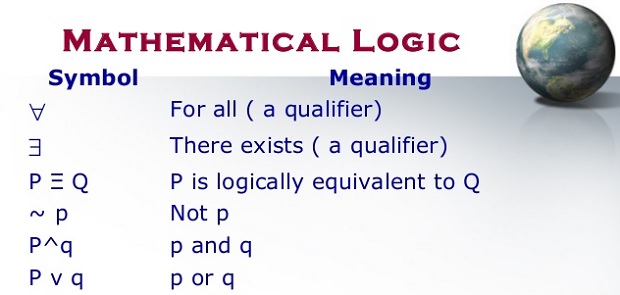

\forall is the TeX command to display the universal quantor symbol. \forall x p(x) means that whatever is x, p(x) applies. In analogy to that \forall x man(x) -> mortal(x) has the meaning that whatever is x, if man(x), then mortal(x).

Apart from \forall first-order logic knows \exists, the existential quantor. \exists x p(x) means that there is at least one x for which p(x) applies. The universal and existential quantors are related to each other in the following way:

~\exists x p(x) == \forall x ~p(x)

\exists x ~p(x) == ~\forall x p(x)

2.3 Second-order logic

In second-order logic predicates themselves can be quantified. So it is possible to make statements such as:

\exists p p(x) ^ ~p(y)

meaning that there is some predicate p for which p(x) applies but not p(y).

2.4 Modal logics

An advanced topic of logic is modal logics. I use the plural form because there are many types of modal logics. All of them have in common that they are about possibilities, and most are defined by Kripke semantics. Kripke semantics assume that there are several possible worlds. Modal logics are extensions of classical logic, and if you just write a classical formula, it means in modal logics that the statement applies to the current world. If you write a statement of the form

\diamond p(x)

this means that p(x) is possible, that is, there is some transition from the current world to another world in which p(x) is valid. Moreover, there is the \box operator:

\box p(x)

has the meaning that there is no transition from the current world to another world in which p(x) is not valid, i.e. it is valid in all worlds to which there is a transition. The two operators are related to each other in the following way:

\diamond p(x) == ~\box ~p(x)

~\diamond ~p(x) == \box p(x)

2.5 Intuitionistic logics

This is a different approach to logic which is NOT compatible to classical logic. The basic idea is that x and ~x have different meanings: While in classical logic x means that x is true and ~x that x is false, in intuitionistic logics x means that x is provable and ~x that x is falsifiable. This has the consequence that the principle of "tertium non datur", i.e. x v ~x always means true, does not apply to intuitionistic logics (in contrast to classical logic). If you cannot prove x, that is not enough to infer that x can be falsified, and if you cannot falsify x, that is not enough to infer that x can be proven. This makes calculation quite different in intuitionistic logics. Some formulae that are valid in classical logic are not provable in intuitionistic logics. I use the plural form again because there are several types of intuitionistic logics, including intuitionistic modal logics.

2.6 Multi-valued logics

An example of this is Fuzzy logic: In such types of logic, there are not only true and false, but many different truth values. This makes computation much more difficult.

3. Theorem Proving

Now about a popular method to prove or falsify logical formulae: sequent calculus. There are various rules how you can transform formulae using it. Basically you start with a formula you want to prove, and then you induce formulae which are premises of the former formulae, i.e. if the premise is true then the conclusion (which at first is the formula you want to prove) is true, too. If you manage to derive an axiom in this way, that is a statement that definitely is true, you have proven the formula.

All the rules that can be used in some of the many variants of sequent calculus can be found at: http://en.wikipedia.org/wiki/Sequent_calculus

There are many other formal methods to prove or falsify theorems, such as the Tableau calculus and resolution. In essence they are, however, similar to sequent calculus. You can find introductory articles on all of these methods at Wikipedia.

Of course there are automated theorem provers that can be run on computers.

4. Conclusion

I hope to have given you a (very) brief overview of some of the areas of modern logics. You can ask me any question related to this subject, I will try to give you an answer as good as I can.

Claus Volko

This article was first published in Hugi Special Edition #4. It is a translation of an article from MATHEMATIQ #1.