Can State of Universe be Stored in Computer?

As the previous article mentioned that it is not clear whether the state of the entire universe can be compressed in such a way that it can be stored in a computer, which itself is part of the universe, I further elaborated on this.

Written by Claus Volko

Vienna, Austria, Europe

Contact: cdvolko (at) gmail (dot) com

Homepage: www.cdvolko.net

This article is a follow-up to my essay "The Universe as Automaton", which I published in WIN ONE, issue 11, from 2013. There, I developed the idea that the state of the universe might be modeled as a three-dimensional matrix and that the entire history of the universe could be represented as a state machine (automaton). In this article I also wrote: "It is not possible to have something that is as large as the entire universe represented by a computer - except, maybe, if it has enough redundancy that a suitable data compression algorithm could be applied." This is what I would like to investigate further now. Can a state of the universe be stored on a computer harddrive? What obstacles are connected with this endeavour?

As a computer is part of the universe, it is obvious that the state of the universe can be stored on its harddrive only if we use some sort of encoding that acts as a data compressor. After all, the file that stores the state of the universe must restore itself upon unpacking since it is part of the universe. In other words, the problem number one is whether it is possible to create a compressed file that, on unpacking, recreates itself, apart from recreating everything else. Unless we answer this question, it makes no sense speculating about the effort required to predict the next state of the universe based on a compressed file containing the current one, and whether this prediction can be done approximately in real-time or whether the universe will be already ahead a couple of states before we finish our prediction.

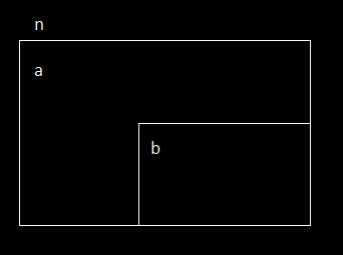

Let us simplify the question. Let us assume a linear memory consisting of n bits, where n = a + b, with a being the number of bits in the memory that are not part of the compressed file (but will be reflected by the contents of the compressed file) and b the space reserved for the compressed file to be created. The compressed file is supposed to have a size of b bits, but its contents must, upon unpacking, amount to the entire memory consisting of n bits. Is this possible?

Figure 1: The entire memory consists of n bits. It is divided into a part of a bits and a part of b bits, where the latter part is reserved for the compressed file which, upon unpacking, must reflect all of the n bits of the entire memory.

There are many compression algorithms used in computer science, one of them being run-length encoding (RLE). So if the data we want to compress consists of a lot of zeros following one another, we can write into the compressed file the digit zero followed by the number of repetitions. If the data consists of a lot of zeros and ones alternating each other, then we can write into the compressed file the number two to signify that it is two different digits that alternate, followed by these digits (zero and one), finally followed by the number of repetitions. If we reserve three bits for the number of digits alternating and ten bits for the number of repetitions, then we can store a sequence of seven bits that is repeated 1023 times in just 3 + 8 + 10 = 21 bits, while in the uncompressed data this repeated sequence would amount to 7161 bits - so we have saved 99.7% of the original space. This shows that, in theory, RLE is a very powerful compression algorithm.

Of course, we must also consider that there might be sequences that cannot be compressed this way. If we wanted to store just one digit (zero or one), we would have to use 1 + 1 + 10 = 12 bits, which is very much considering that a single digit (zero or one) occupies only one bit in the original file. The solution would be to use special codes for turning RLE off and on again, such as a sequence of four digits 0000 and 1111, where 00001111 would be interpreted as four ones and only the next incidence of 1111 would turn RLE on again.

If we do it this way, we might really be able to encode n bits in b bits, where b is less than n. However, there might be a requirement regarding the relation of b to a, i. e. a minimum size. This we would have to further investigate. Also, we must consider that the pointer that points to the data that is to be compressed will sooner or later reach the beginning of b, in which point of time b can be divided into b' (which is the encoded state of a) and c (which has not been written yet and is going to start with the encoded state of b'). As soon as the pointer has reached c, we can further divide it into c' and d in an analogous fashion, and once d is reached, we can divide it into d' and e, and so on. Ultimately, the size of c', d', e', ... must converge to zero, otherwise it will not work. What is the relation of c to b' required to make this work? That is also something that should be investigated.

If we ever reach the state that the rest of the file is about to encode itself - can this be achieved? Yes, it can: We can achieve this if RLE has been turned off. Then the file corresponds to itself. Alas, is it always possible to achieve that once this state is reached, RLE is turned off? Is it also possible to succeed if RLE is turned on at this point of time? Are there any restrictions regarding the remaining space? All of these are highly interesting questions and I will leave it to you to speculate about them.

This article was originally published in IQ Nexus Journal Vol. IX, No. IV, from 2017.

Claus Volko